In this post I'm going to be discussing this article, but mostly its dataset:

Néma, J., Zdara, J., Lašák, P., Bavlovič, J., Bureš, M., Pejchal, J., & Schvach, H. (2023). Impact of cold exposure on life satisfaction and physical composition of soldiers. BMJ Military Health. Advance online publication. https://doi.org/10.1136/military-2022-002237

The article itself doesn't need much commentary from me, since it has already been covered by Stuart Ritchie on Twitter here and in his iNews column here, as well as by Gideon Meyerowitz-Katz on Twitter here. So I will just cite or paraphrase some sentences from the Abstract:

[T]he aim of this study was to examine the effect of regular cold exposure on the psychological status and physical composition of healthy young soldiers in the Czech Army. A total of 49 (male and female) soldiers aged 19–30 years were randomly assigned to one of the two groups (intervention and control). The participants regularly underwent cold exposure for 8 weeks, in outdoor and indoor environments. Questionnaires were used to evaluate life satisfaction and anxiety, and an "InBody 770" device was used to measure body composition. Among other results, systematic exposure to cold significantly lowered perceived anxiety (p=0.032). Cold water exposure can be recommended as an addition to routine military training regimens and is likely to reduce anxiety among soldiers.

The article PDF file contains a link to a repository in which the authors originally placed an Excel file of their main dataset named "Dataset_ColdExposure_sorted_InBody.xlsx" (behind the link entitled "InBody - Body Composition"). I downloaded this file and explored it, and found some interesting things that complement the investigations of the article itself; these discoveries form the main part of this blog post.

Recently, however—probably in reaction to the authors being warned by Gideon or someone else that their dataset contained personally identifying information (PII)—this file has been replaced with one named "Datasets_InBody+WC_ColdExposure.csv". I will discuss the new file near the end of this post, but for now, the good news is that the file containing PII is no longer publicly available.

[[ Update 2023-03-11 23:23 UTC: Added new information here about the LSQ dataset, and—further along in this post—a paragraph about the analysis of these data. ]]

The repository also contains a data file called "Dataset_ColdExposure_LSQ.csv", which represents the participants' responses at two timepoints to the Life Satisfaction Questionnaire. I downloaded this file and attempted to match the participant data across the two datasets.

The structure of the main dataset file

The Excel file that I downloaded contains six worksheets. Four of these contain the data for the two conditions that were reported in the article (Cold and Control), one each at baseline and at the end of the treatment period. Within those worksheets, participants are split into male and female, and within each gender a sequence number starting at 1 identifies each participant. A fifth worksheet named "InBody Začátek" contains the baseline data for each participant, and a sixth, named "InBody1", appears to contain every data record for each participant, as well as some columns which, while mostly empty, appear designed to hold contact information for each person.

Every participant's name and date of birth is in the file (!)

The first and most important problem in the file as it was uploaded, and was in place until a couple of days ago, is that a lot of PII was left in there. Specifically, the file contained the first and last names and date of birth of every participant. This study was carried out in the Czech Republic, and I am not familiar with the details of research ethics in that country, but it seems to me to be pretty clear that it is not acceptable to conduct before-and-after physiological measurements on people and then publish those numbers along with information that in most cases probably identifies them uniquely among the population of their country.

I have modified the dataset file to remove this PII before I share it. Specifically, I did this:

- Replaced the names of participants with random fake names assembled from lists of popular English-language first and last names. I use these names below where I need to identify a particular participant's data.

- Replaced the date of birth with a fake date consisting of the same year, but a random month and day. As a result of this, the "Age" column, which appears to have been each participant's age at their last birthday before they gave their baseline data, may no longer match the reported (fake) date of birth.
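A date replacement of this kind could be sketched roughly as follows. This is only an illustration using Python's standard library: the function name `fake_date_of_birth`, the seed, and the example date are invented here and are not taken from the dataset or from the workflow actually used.

```python
import calendar
import datetime
import random


def fake_date_of_birth(real_dob: datetime.date, rng: random.Random) -> datetime.date:
    """Keep the birth year, but draw the month and the day at random."""
    month = rng.randint(1, 12)
    # monthrange() returns (weekday of the 1st, number of days in the month),
    # so leap years are handled automatically.
    days_in_month = calendar.monthrange(real_dob.year, month)[1]
    day = rng.randint(1, days_in_month)
    return datetime.date(real_dob.year, month, day)


rng = random.Random(2023)                 # arbitrary seed, for repeatability only
real = datetime.date(1995, 6, 15)         # made-up date, not a participant's
example = fake_date_of_birth(real, rng)
print(example)                            # same year, random month and day
```

Because only the year survives, an age computed from the fake date can differ by one year from the recorded "Age" value, which is the mismatch mentioned above.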

What actually happened in the study?

The Abstract states (see above) that 49 participants were in two conditions: exposure to Cold (Chlad, in Czech) and a no-treatment Control group (Kontrolní). But in the dataset there are 99 baseline measurement records, and the participants are recorded as being in four conditions. As well as Cold and Control, there is a condition called Mindfulness (the English word is used), and another called Spánek, which means Sleep in Czech.

This is concerning because these additional participants and conditions are not mentioned in the article. The Method section states that "A total of 49 soldiers (15 women and 34 men) participated in the study and were randomly divided into two groups (control and intervention) before the start of the experiment". If the extra participants and conditions were part of the same study, this should have been reported; the above sentence, as written, seems to be stretching the idea of innocent omission quite a bit. Omitting conditions and participants is a powerful "researcher degree of freedom" in the sense of Simmons et al.'s classic paper entitled "False-Positive Psychology". If these participants and conditions were not part of the same study then something very strange is happening, as it would imply that there were at least two studies being conducted with the same participant ID sequence number assignment and reported in the same data file.

Data seem to have been collected in two principal waves, January (leden) and March (březen) 2022. It is not clear why this was done. A few tests seem to have been performed in late 2021 or in February, April, or May 2022, but whatever the date, all participants were assigned to one of the two month groups. For reasons that are not clear, one participant whose baseline data were collected in December 2021 ("Martin Byrne") was assigned to the March group, although his data did not end up in the final group worksheets that formed the basis of the published article. Meanwhile, six participants ("George Fletcher", "Harold Gregory", "Harvey Barton", "Nicole Armstrong", "Christopher Bishop", and "Graham Foster") were assigned to the January group even though their data were collected in March 2022 or later; five of these (all except for "Nicole Armstrong") did end up in the final group worksheets. It seems that the grouping into "January" and "March" did not affect the final analyses, but it does make me wonder what the authors had in mind in creating these groups and then assigning people to them without apparently respecting the exact dates on which the data were collected. Again, it seems that plenty of researcher degrees of freedom were available.

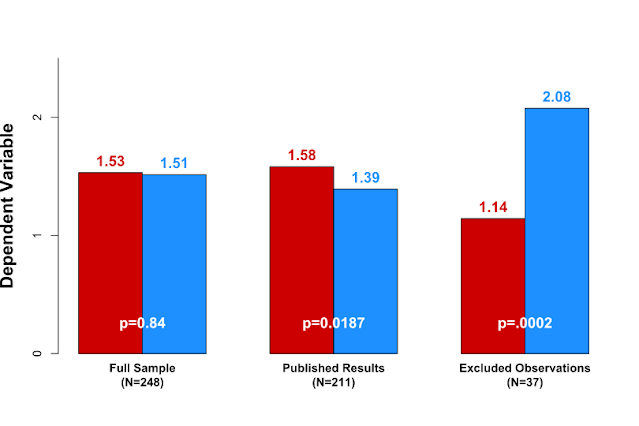

How were participants filtered out?

There are 99 records in the baseline worksheet. These are in conditions as follows: Chlad (Cold), 28 (18 “leden/January”, 10 “březen/March”); Kontrolní (Control), 41 (33 “leden/January”, 8 “březen/March”); Mindfulness, 11 (all “leden/January”); Spánek (Sleep), 19 (11 “leden/January”, 8 “březen/March”).

Of the 28 participants in the Cold condition, three do not appear in the final Cold group worksheets that were used for the final analyses. Of the 41 in the Control condition, 17 do not appear in the final Control group worksheets. It is not clear what criteria were used to exclude these 3 (of 28) or 17 (of 41) people. The three in the Cold condition were all aged over 30, which corresponds to the reported cutoff age from the article, but does rather suggest that this cutoff might have been decided post hoc. Of the 17 people in the Control condition who did not make it to the final analyses, 11 were aged over 30, but six were not, so it is even more unclear why they were excluded.
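As an arithmetic check, the counts quoted in this section can be tallied in a few lines of Python. All of the numbers come from the text above; only the variable names are invented for the illustration.

```python
# Baseline records per condition and wave, as listed above.
baseline = {
    "Chlad (Cold)": {"leden": 18, "březen": 10},
    "Kontrolní (Control)": {"leden": 33, "březen": 8},
    "Mindfulness": {"leden": 11, "březen": 0},
    "Spánek (Sleep)": {"leden": 11, "březen": 8},
}

totals = {cond: sum(waves.values()) for cond, waves in baseline.items()}
total_records = sum(totals.values())

# Records that never reach the final group worksheets.
excluded = {"Chlad (Cold)": 3, "Kontrolní (Control)": 17}
final = {cond: totals[cond] - n for cond, n in excluded.items()}

print(totals)
print(total_records)          # 99 baseline records
print(final)                  # 25 Cold and 24 Control
print(sum(final.values()))    # 49, the sample size reported in the article
```

The totals are internally consistent: 99 baseline records, of which 25 Cold and 24 Control records make up the 49 reported in the article.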

Despite the claim by the authors that participants were aged 19–30, four people in the final Cold condition worksheets ("Anthony Day", "Hunter Dunn", "Eric Collins", and "Harvey Barton") are aged between 31 and 35.

Were the authors participants themselves?

At least two, and likely five, of the participants appear to be authors of the article. I base this observation on the fact that in five cases, a participant has the same last name and initial as an author. In two of those cases, an e-mail address is reported that appears to correspond to the institution of that author. For the other three, I contacted a Czech friend, who used this website to look up the frequencies of the names in question; he told me that the last names (with any initials) only correspond to 55, 10, and 3 people in the entire Czech Republic, out of a population of 10.5 million.

Now, perhaps all of these people—one of whom ended up in the final Control group—are also active-duty military personnel, but it still does not seem appropriate for a participant in a psychological study that involves self-reported measures of one's attitudes before and after an intervention to also be an author on the associated article and hence at least implicitly involved with the design of the study. This also calls into question the randomisation and allocation process, as it is unlikely a randomised trial could have been conducted appropriately if investigators were also participants. (The article itself gives no detail about the randomisation process.)

Some of the participants in the final sample are duplicates (!)

The authors claimed that their sample (which I will refer to as the "final sample", given the uncertainty over the number of people who actually participated in the study) consisted of 49 people, which the reader might reasonably assume means 49 unique individuals. Yet, there are some obvious duplicates in the worksheets that describe the Cold and Control groups:

- The participant to whom I have assigned the fake name "Stella Arnold" appears both in the Cold group with record ID #7 and in the Control group with record ID #6, both with Gender=F (there are separate sequences of ID numbers for male and female participants within each worksheet, with both sequences starting at 1, so the gender is needed to distinguish between them). The corresponding baseline measurements are to be found in rows 9 and 96 of the "InBody Začátek" (baseline measurements) worksheet.

- The participant to whom I have assigned the fake name "Harold Gregory" appears both in the Cold group with record ID #15 and in the Control group with record ID #12, both with Gender=M. The corresponding baseline measurements are to be found in rows 38 and 41 of the "InBody Začátek" worksheet.

- The participant to whom I have assigned the fake name "Stephanie Bird" appears twice in the Control groups with record IDs #4 and #7, both with Gender=F. The corresponding baseline measurements are to be found in rows 73 and 99 of the "InBody Začátek" worksheet.

- The participant to whom I have assigned the fake name "Joe Gill" appears twice in the Control groups with record IDs #4 and #7, both with Gender=M. The corresponding baseline measurements are to be found in rows 57 and 62 of the "InBody Začátek" worksheet.

In view of this, it seems difficult to be certain about the actual sample size of the final (two-condition) study, as reported in the article.
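A check for repeated names in the final worksheets might look something like the sketch below. The (fake name, group) pairs are the ones mentioned in this post; the two extra Cold entries are included only so that there are some non-duplicates, and the rest of the 49 records are omitted. The variable names are illustrative.

```python
from collections import defaultdict

# (fake name, final group) pairs taken from this post; not the full sample.
final_records = [
    ("Stella Arnold", "Cold"), ("Stella Arnold", "Control"),
    ("Harold Gregory", "Cold"), ("Harold Gregory", "Control"),
    ("Stephanie Bird", "Control"), ("Stephanie Bird", "Control"),
    ("Joe Gill", "Control"), ("Joe Gill", "Control"),
    ("Hunter Dunn", "Cold"),
    ("Eric Collins", "Cold"),
]

groups_by_name = defaultdict(list)
for name, group in final_records:
    groups_by_name[name].append(group)

# Anyone listed more than once is a candidate duplicate.
duplicates = {name: groups for name, groups in groups_by_name.items() if len(groups) > 1}

for name, groups in duplicates.items():
    print(name, groups)
```

If these four people are the only repeats, the 49 records in the final sample would correspond to 45 distinct names.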

Many other participants were assigned to more than one of the four conditions

36 of the 99 records in the baseline worksheet have duplicated names. Put another way, 18 people appear to have been enrolled in the overall (four-condition) study in two different conditions. Of these, five were in the Control condition in both time periods ("leden/January" and "březen/March"); four were in the Control condition once and a non-Control condition once; and nine were recorded as being in two non-Control conditions. In 17 cases the two conditions were labelled with different time periods, but in one case ("Harold Gregory"), both conditions (Cold/Control) were labelled "leden/January". This participant was one of the two who appeared in both final conditions (see previous paragraph); he is also one of the six participants assigned to a "January" group with data that were actually collected in March 2022.
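The three-way breakdown above can be expressed as a small classification rule. The sketch below applies it to five participants whose pairs of conditions are described elsewhere in this post; it is an illustration of the rule, not a reconstruction of all 18 cases, and the function and label names are invented.

```python
from collections import Counter

# Pairs of conditions for five participants, as described in this post.
condition_pairs = {
    "Harold Gregory": ("Cold", "Control"),
    "Stella Arnold": ("Cold", "Control"),
    "Stephanie Bird": ("Control", "Control"),
    "Joe Gill": ("Control", "Control"),
    "Orlando Goodwin": ("Sleep", "Cold"),
}


def classify(pair):
    """Label a pair of conditions by how many times Control occurs in it."""
    n_control = sum(1 for condition in pair if condition == "Control")
    return {2: "Control twice", 1: "Control once", 0: "no Control"}[n_control]


tally = Counter(classify(pair) for pair in condition_pairs.values())
print(tally)

# Consistency check on the counts reported in the text.
reported = {"Control twice": 5, "Control once": 4, "no Control": 9}
people = sum(reported.values())
print(people, people * 2)     # 18 people, 36 records
```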

Continuing on this point, two records in the worksheet ("InBody1") that contains the record of all tests, both baseline and subsequent, appear to refer to the same person, as the dates of birth are the same (although the participant ID numbers are different) and the original Czech names differ only in the addition/omission of one character; for example, if the names were English, this might be "John Davis" and "John Davies". The fake names of these two records in the dataset that I am sharing are "Anthony Day" and "Arthur Burton", with "Anthony Day" appearing in the final worksheets and being 31 years old, as mentioned above. The height and other physiological data for these two records, dated two months apart, are similar but not identical.
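Near-duplicates of this kind can be flagged by looking for records that share a date of birth and whose names are one edit apart. The sketch below uses a plain Levenshtein distance written with the standard library only. The names are the English stand-ins used above, and the dates of birth are made up.

```python
from itertools import combinations


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # substitute, or keep if equal
            ))
        previous = current
    return previous[-1]


# (name, date of birth) with made-up dates; not real participant data.
records = [
    ("John Davis", "1990-01-01"),
    ("John Davies", "1990-01-01"),
    ("Jane Smith", "1990-01-01"),
]

suspects = [
    (r1[0], r2[0])
    for r1, r2 in combinations(records, 2)
    if r1[1] == r2[1] and 0 < edit_distance(r1[0], r2[0]) <= 1
]
print(suspects)
```

A threshold of one edit is deliberately strict; a looser threshold would catch more spelling variants at the cost of more false alarms.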

Inconsistencies across the datasets

The LSQ data contains records for 49 people, with 25 in the Cold group and 24 in the Control group, which matches the main dataset. However, there are some serious inconsistencies between the two datasets.

First, the gender split of the Control group is not the same between the datasets. In the main dataset, and in the article, there were 17 men and 7 women in this group. However, in the LSQ dataset, there are 14 men and 10 women in the Control group.

Second, the ages that are reported for the participants in the LSQ data do not match the ages in the main dataset. There is not enough information in the LSQ dataset (which has its own participant ID numbering scheme) to reliably connect individual participants across the two, but both datasets report the age of the participants, so as a minimum it should be possible to find correspondences at that level. However, this is not the case. Leaving aside the three participants who differ on gender (which I chose to do by assuming that the main dataset was correct, since its gender split matches the article), there are 11 other entries in the LSQ dataset where I was unable to find a corresponding match on age in the main dataset. Of those 11, three differ by just one year, which could perhaps just be explained by the participant having a birthday between two data collection timepoints, but for the other eight, the difference is at least 3 years, no matter how one arranges the records.

In summary, the LSQ dataset is inconsistent with the main dataset for 14 of its 49 records (29%).
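The age comparison described above amounts to matching two multisets of ages. One way to sketch it: cancel out exact matches first, then pair the sorted leftovers, which gives the smallest total gap when the two leftover lists have equal length. The ages below are made up for the illustration, and in practice one would presumably run this separately within each group and gender.

```python
from collections import Counter


def unmatched_ages(ages_a, ages_b):
    """Cancel exact age matches and return the sorted leftovers from each list."""
    count_a, count_b = Counter(ages_a), Counter(ages_b)
    left_a = sorted((count_a - count_b).elements())
    left_b = sorted((count_b - count_a).elements())
    return left_a, left_b


# Made-up ages, purely for illustration; not values from either dataset.
main_ages = [21, 22, 22, 25, 27, 30]
lsq_ages = [21, 22, 23, 25, 27, 34]

left_main, left_lsq = unmatched_ages(main_ages, lsq_ages)

# Pair the leftovers in sorted order to get the most charitable matching.
gaps = [abs(a - b) for a, b in zip(left_main, left_lsq)]
print(left_main, left_lsq, gaps)
```

In this toy example one leftover pair differs by a single year (which a birthday could explain) and the other by four years (which it could not).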

Other curiosities

Several participants, including two in the final Control condition, have decimal commas instead of decimal points for their non-integer values. There are also several instances in the original datafile where cells in analysed columns have numbers recorded as text. It is not clear how these mixed formats could have been either generated or (conveniently) analysed by software.
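To illustrate why these mixed formats matter, here is a minimal sketch of the normalisation that would be needed before any numeric analysis. The helper name `to_float` and the example cell values are invented, and the function assumes that a comma is only ever a decimal separator, never a thousands separator.

```python
def to_float(cell):
    """Convert a spreadsheet cell to float, accepting text and decimal commas."""
    if isinstance(cell, (int, float)):
        return float(cell)
    # Text cell: trim whitespace and treat a comma as the decimal separator.
    return float(str(cell).strip().replace(",", "."))


# Made-up cell contents showing the three cases described above.
cells = [23.5, "23,5", " 23.5 "]
values = [to_float(cell) for cell in cells]
print(values)
```

Without a step like this, most software would either refuse to compute on such a column or silently treat the text cells as missing.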

Participants have a “Date of Registration”. It is not clear what this means. In 57 out of 99 cases, this date is the same as the date of the tests, which might suggest that this is the date when the participant joined the study, but some of the dates go back as far as 2009.

Data were sometimes collected on more than two occasions per participant (or on more than four occasions for some participants who were, somehow, assigned to two conditions). For example, data were collected four times from "Orlando Goodwin" between 2022-03-07 and 2022-05-21, while he was in the Cold condition (to add to the two times when data were collected from him between 2022-01-13 and 2022-02-15, when he was in the Sleep condition). However, the observed values in the record for this participant in the "Cold_Group_After" worksheet suggest that the last of these four measurements to be used was the third, on 2022-05-05. The purpose of the second and fourth measurements of this person in the Cold condition is thus unclear, but again it seems that this practice could lead to abundant researcher degrees of freedom.

There are three different formats for the participant ID field in the worksheet that contains all the measurement records. In the majority of the cases, the ID seems to be the date of registration in YYMMDD format, followed by a one- or two-digit sequence number, for example "220115-4" for the fourth participant registered on January 15, 2022. In some cases the ID is the letters "lb" followed by what appears to be a timestamp in YYMMDDHHMMSS format, such as "lb151210070505". Finally, one participant (fake name "Keith Gordon") has the ID "d14". This degree of inconsistency does not convey an atmosphere of rigour.
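The three ID formats can be told apart with two regular expressions and a fallback. The patterns below are inferred from the examples quoted above, so they are an assumption about the scheme rather than a documented specification; the function and label names are invented.

```python
import re
from datetime import datetime

DATE_SEQ = re.compile(r"(\d{6})-(\d{1,2})")   # YYMMDD, hyphen, sequence number
LB_STAMP = re.compile(r"lb(\d{12})")          # "lb" plus YYMMDDHHMMSS


def classify_id(participant_id: str) -> str:
    """Return a label for the format that a participant ID appears to follow."""
    if DATE_SEQ.fullmatch(participant_id):
        return "date-sequence"
    if LB_STAMP.fullmatch(participant_id):
        return "lb-timestamp"
    return "other"


def registration_date(participant_id: str):
    """Extract the date from a date-sequence ID, or None for other formats."""
    match = DATE_SEQ.fullmatch(participant_id)
    if match is None:
        return None
    return datetime.strptime(match.group(1), "%y%m%d").date()


for pid in ["220115-4", "lb151210070505", "d14"]:
    print(pid, classify_id(pid), registration_date(pid))
```

Extracting the date this way also makes it possible to compare the ID against the separate "Date of Registration" field.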

The new data file

I downloaded the original (XLSX format) data file on 2023-03-05 (March 5) at 20:52 UTC. That file (or at least, the link to it) was still there on 2023-03-07 (March 7) at 11:59 UTC. When I checked on 2023-03-08 (March 8) at 17:26 UTC the link was dead, implying that the file with PII had been removed at some intermediate point in the previous 30 hours. At some point after that a new dataset file was uploaded to the same location, which I downloaded on 2023-03-09 (March 9) at 14:27 UTC. This new file, in CSV format, is greatly simplified compared to the original. Specifically:

- The data for the two conditions (Cold/Control) and the two timepoints (baseline/end) have been combined into one sheet in place of four.

- The worksheets with the PII and other experimental conditions have been removed.

- Most of the data fields have been removed; I assume that the remaining fields are sufficient to reproduce the analyses from the paper, but I haven't checked that as it isn't my purpose here.

- Two data fields have been added. One of these, named "SMM(%)", appears to be calculated as the fraction of the participant's weight that is accounted for by their skeletal muscle mass, both of which were present in the initial dataset. However, the other, named "WC (waist circumference)", appears to be new, as I cannot find it anywhere in the initial dataset. This might make one wonder what other variables were collected but not reported.

Apart from these changes, however, the data concerning the final conditions (49 participants, Cold and Control) are identical to the first dataset file. That is, the four duplicate participants described above are still in there; it's just harder to spot them now without the baseline record worksheet to tie the conditions together.

Data availability

I have made two censored versions of the dataset available here. One of these ("Simply_anonymized_dataset.xlsx") has been made very quickly from the original dataset by simply deleting the participants' names, dates of birth, and (where present) e-mail addresses. The other ("Public_analysis_dataset.xls") has been cleaned up from the original in several ways, and includes the fake names and dates of birth discussed above. This file is probably easier to follow if you want to reproduce my analyses. I believe that in both cases I have taken sufficient steps to make it impractical to identify any of the participants from the remaining information.

At the same location I have also placed another file ("Compare_datasets.xls") in which I compare the data from the initial and new dataset files, and demonstrate that where the same fields are present, their values are identical.
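A field-by-field comparison of this kind can be sketched in a few lines. The example below is not the content of "Compare_datasets.xls": the row values are made up, and the field names "Weight", "SMM", and "Height" are placeholders (only "SMM(%)" and "WC (waist circumference)" are names quoted in this post). It also checks the assumed definition of "SMM(%)" as skeletal muscle mass divided by weight.

```python
def compare_shared_fields(old_row: dict, new_row: dict):
    """Compare two versions of one record on the fields that both contain."""
    shared = sorted(set(old_row) & set(new_row))
    mismatches = {
        field: (old_row[field], new_row[field])
        for field in shared
        if old_row[field] != new_row[field]
    }
    only_new = sorted(set(new_row) - set(old_row))
    return shared, mismatches, only_new


# Made-up values for a single hypothetical participant.
old_row = {"Weight": 80.0, "SMM": 36.0, "Height": 180.0}
new_row = {"Weight": 80.0, "SMM": 36.0, "SMM(%)": 45.0, "WC (waist circumference)": 85.0}

shared, mismatches, only_new = compare_shared_fields(old_row, new_row)
print(shared, mismatches, only_new)

# Assumed definition: SMM(%) = 100 * skeletal muscle mass / body weight.
derived_pct = 100 * old_row["SMM"] / old_row["Weight"]
print(abs(derived_pct - new_row["SMM(%)"]) < 1e-9)
```

An empty `mismatches` dictionary for every record is what "identical where the same fields are present" means in practice, while `only_new` lists fields, such as waist circumference, that cannot be checked against the original file.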

If anyone wants to check my work against the original, untouched dataset file, which includes the PII of the participants, then please contact me and we can discuss it. There's no obvious reason why I should be entitled to see this PII and another suitably qualified researcher should not, but of course it would not be a good idea to share it for all to see.

Acknowledgements

My thanks go to:

- Gideon Meyerowitz-Katz (@GidMK) for interesting discussions and contributing a couple of the points in this post, including making the all-important discovery of the PII (and writing to the authors to get them to take it down).

- @matejcik for looking up the frequencies of Czech names.

- Stuart Ritchie for tweeting skeptically about the hyping of the results of the study.